Basics

- True Positive (TP): actual 1, prediction 1

- True Negative (TN): actual 0, prediction 0

- False Positive (FP): actual 0, prediction 1

- False Negative (FN): actual 1, prediction 0
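A minimal sketch of how the four counts above could be tallied from binary labels. The function name `confusion_counts` and the sample lists are illustrative assumptions, not part of any library.

```python
def confusion_counts(actual, predicted):
    """Return (TP, TN, FP, FN) for two equal-length lists of 0/1 labels."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    tp = tn = fp = fn = 0
    for a, p in zip(actual, predicted):
        if a == 1 and p == 1:
            tp += 1
        elif a == 0 and p == 0:
            tn += 1
        elif a == 0 and p == 1:
            fp += 1
        elif a == 1 and p == 0:
            fn += 1
        else:
            raise ValueError("labels must be 0 or 1")
    return tp, tn, fp, fn


actual = [1, 0, 1, 1, 0, 0, 1, 0]
predicted = [1, 0, 0, 1, 1, 0, 1, 0]
print(confusion_counts(actual, predicted))  # (3, 3, 1, 1)
```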

Accuracy

$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$
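As a quick worked example of the formula above, a small sketch (the helper name `accuracy` is an assumption made for illustration):

```python
def accuracy(tp, tn, fp, fn):
    """Fraction of all observations that were classified correctly."""
    total = tp + tn + fp + fn
    if total == 0:
        raise ValueError("no observations")
    return (tp + tn) / total


# 3 TP + 3 TN correct out of 8 observations
print(accuracy(tp=3, tn=3, fp=1, fn=1))  # 0.75
```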

Recall

Out of all actual positives, how many were predicted positive.

$\text{Recall} = \frac{TP}{TP+FN}$

Use when false negatives are costly (cancer detection, criminal detection).
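A small sketch of the recall formula. The name `recall` is illustrative, and returning 0.0 when there are no actual positives is an assumed convention (the ratio is undefined in that case).

```python
def recall(tp, fn):
    """TP / (TP + FN): share of actual positives that were found."""
    denom = tp + fn
    if denom == 0:
        return 0.0  # assumed convention: no actual positives to find
    return tp / denom


# 9 of 10 actual positives were detected, 1 was missed
print(recall(tp=9, fn=1))  # 0.9
```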

Precision

Out of all positive predictions, how many were actually positive.

$\text{Precision} = \frac{TP}{TP+FP}$

Use when false positives are costly (spam filter, fraud detection).
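A small sketch of the precision formula. The name `precision` is illustrative, and returning 0.0 when nothing was predicted positive is an assumed convention (the ratio is undefined in that case).

```python
def precision(tp, fp):
    """TP / (TP + FP): share of positive predictions that were right."""
    denom = tp + fp
    if denom == 0:
        return 0.0  # assumed convention: nothing was predicted positive
    return tp / denom


# 8 of 10 messages flagged as spam really were spam
print(precision(tp=8, fp=2))  # 0.8
```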

Raising the decision threshold usually increases precision and decreases recall; lowering it does the opposite.
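The threshold trade-off can be seen on a toy example. This is a sketch under assumptions: the scores and labels are made up, `precision_recall_at` is a hypothetical helper, and a score at or above the threshold counts as a positive prediction.

```python
def precision_recall_at(scores, labels, threshold):
    """Precision and recall when predicting 1 for scores >= threshold."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for p, y in zip(preds, labels) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(preds, labels) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(preds, labels) if p == 0 and y == 1)
    # Assumed convention: undefined ratios are reported as 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


scores = [0.95, 0.85, 0.70, 0.60, 0.40, 0.20]  # made-up model scores
labels = [1, 1, 0, 1, 0, 0]                    # made-up ground truth

for t in (0.3, 0.5, 0.8):
    p, r = precision_recall_at(scores, labels, t)
    print(f"threshold={t}: precision={p:.2f}, recall={r:.2f}")
# threshold=0.3: precision=0.60, recall=1.00
# threshold=0.5: precision=0.75, recall=1.00
# threshold=0.8: precision=1.00, recall=0.67
```

On real data precision does not have to rise at every step, but recall can never increase as the threshold goes up.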

F1-Score

Harmonic mean of Precision (P) and Recall (R):

$F1 = \frac{2 \cdot P \cdot R}{P+R}$
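A short sketch of why the harmonic mean is used: it stays low unless both P and R are high. The helper name `f1_score` is illustrative here (not the scikit-learn function), and returning 0.0 when P + R = 0 is an assumed convention.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention for the undefined case
    return 2 * precision * recall / (precision + recall)


p, r = 1.0, 0.5
print(f"arithmetic mean: {(p + r) / 2:.3f}")  # 0.750
print(f"F1:              {f1_score(p, r):.3f}")  # 0.667
```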

R2 Score

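Range: $(-\infty, 1]$. 1 is a perfect fit, 0 is no better than always predicting the mean of the targets, and negative values are worse than that baseline.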

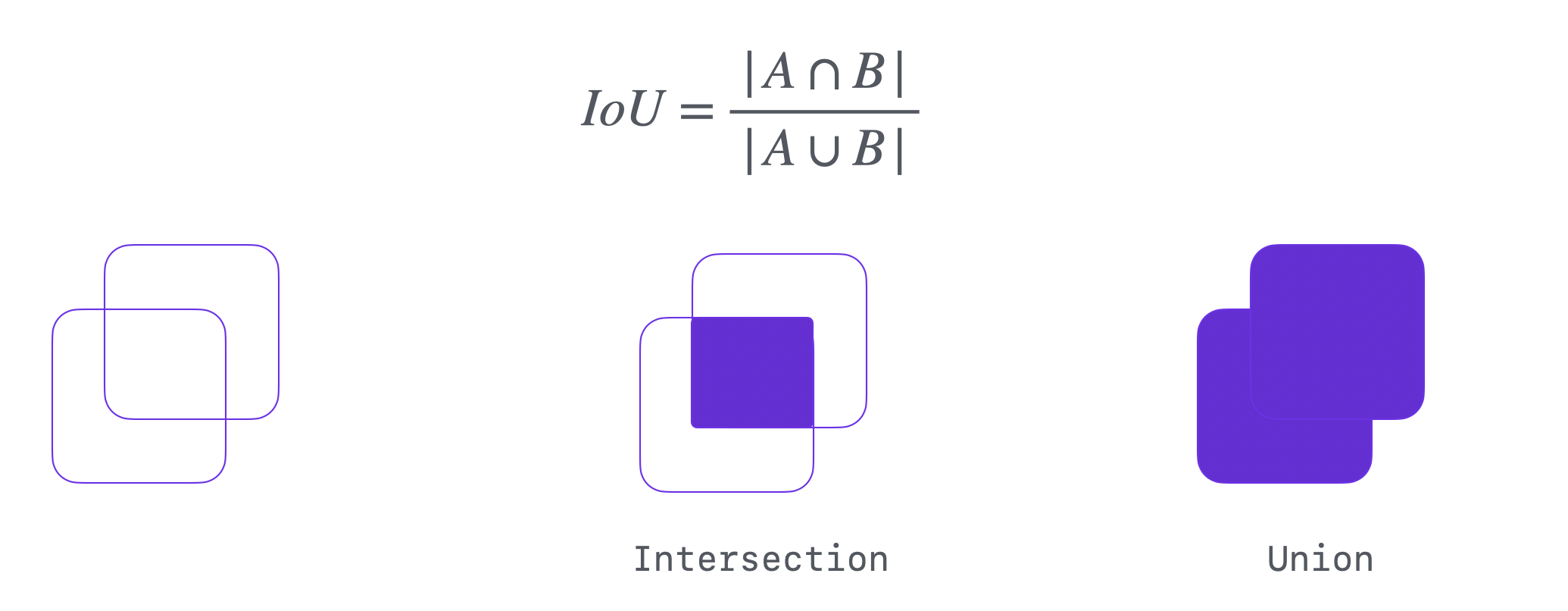
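A minimal sketch of the score, assuming the usual definition $R^2 = 1 - SS_{res} / SS_{tot}$ (the notes do not spell the formula out in text). The function name `r2` and the sample values are illustrative.

```python
def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R2 is undefined when all targets are equal")
    return 1 - ss_res / ss_tot


y_true = [1.0, 2.0, 3.0, 4.0]
print(r2(y_true, [1.0, 2.0, 3.0, 4.0]))  # 1.0, perfect predictions
print(r2(y_true, [2.5, 2.5, 2.5, 2.5]))  # 0.0, always predicting the mean
print(r2(y_true, [1.5, 2.0, 3.0, 3.5]))  # about 0.9, small errors
```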

IoU (Intersection over Union)

$IoU = \frac{\text{Area of Overlap}}{\text{Area of Union}}$
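For axis-aligned bounding boxes, the ratio above can be sketched as follows. The `(x1, y1, x2, y2)` corner format and the name `box_iou` are assumptions made for this example.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2) with x1 < x2, y1 < y2."""
    # Corners of the intersection rectangle
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    # Width/height clamp to 0 when the boxes do not overlap
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# Overlap area 1, union area 4 + 4 - 1 = 7
print(box_iou((0, 0, 2, 2), (1, 1, 3, 3)))  # 1/7, about 0.143
```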

Dice Coefficient

$\text{Dice} = \frac{2 \times \text{Intersection}}{\text{Area of Prediction + Area of Ground Truth}} = \frac{2 \times IoU}{1 + IoU}$

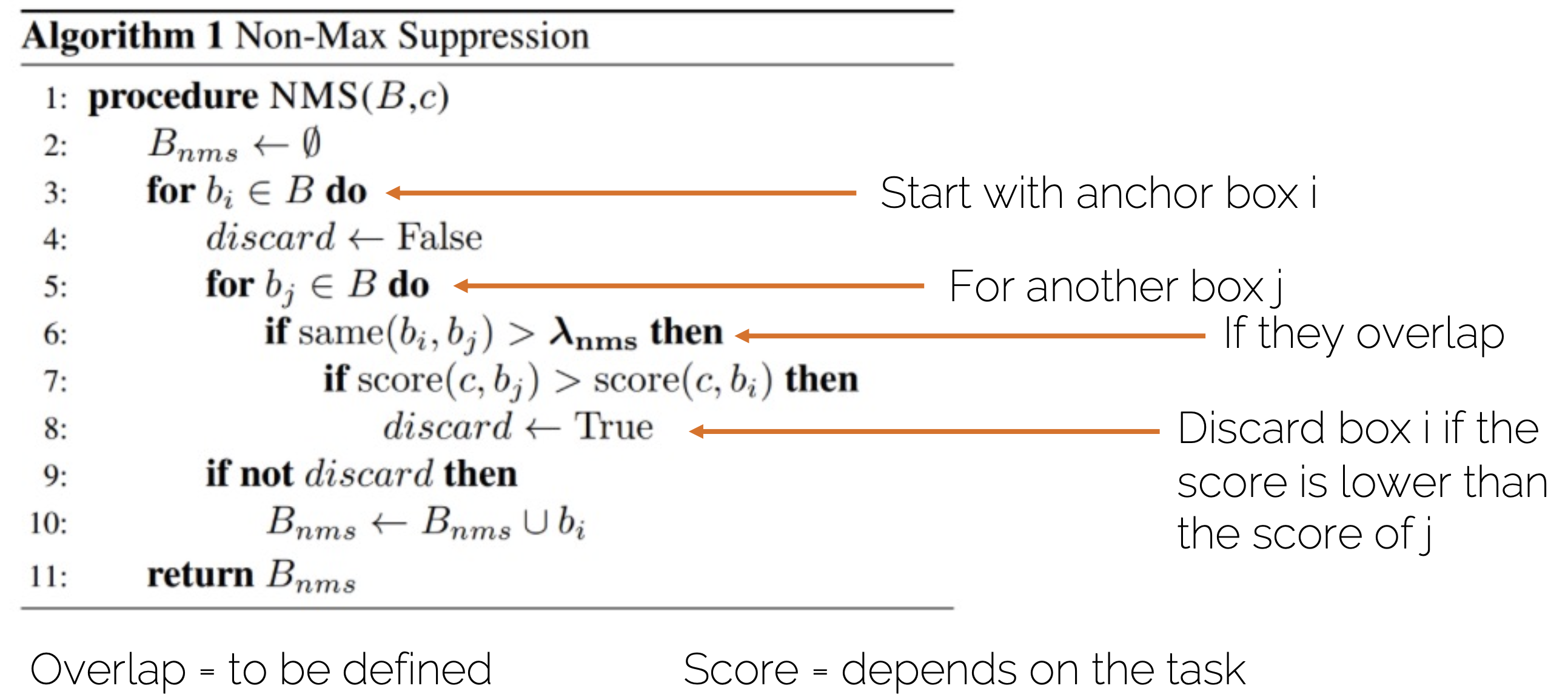

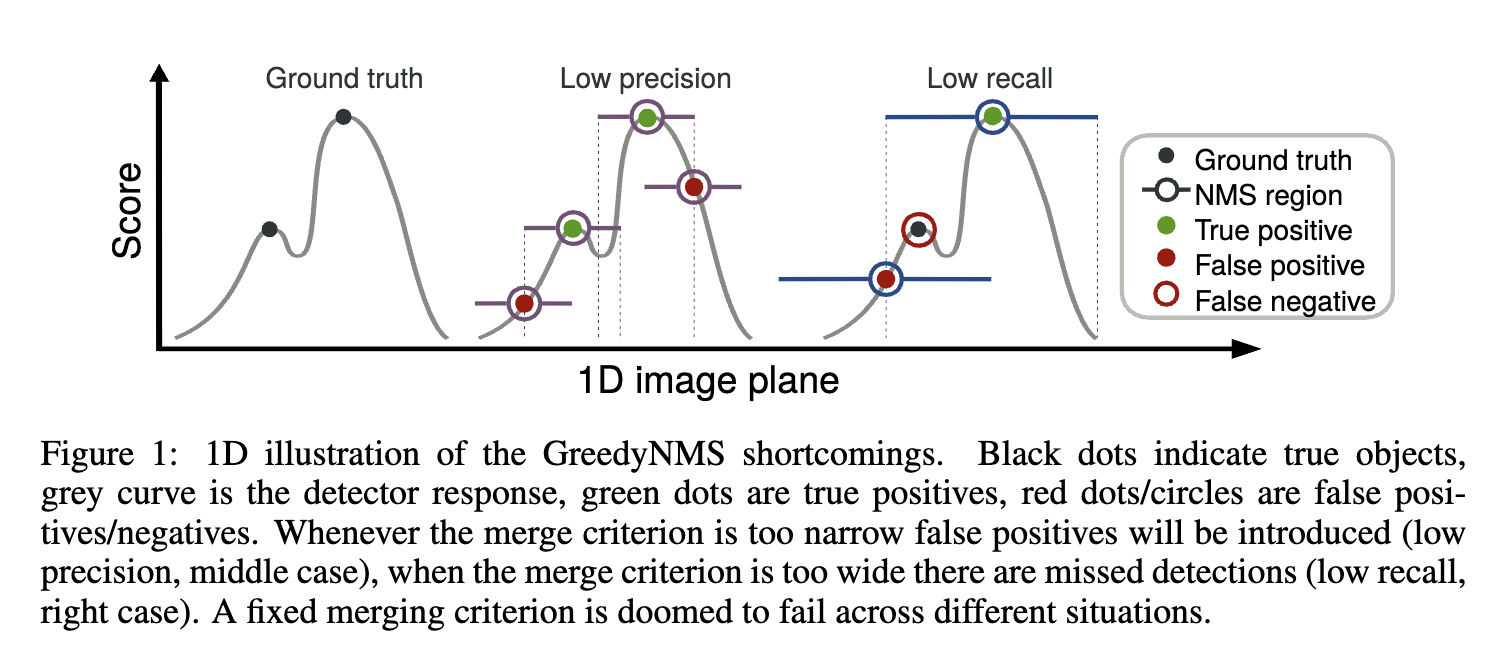
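The Dice/IoU identity can be checked numerically on a toy segmentation. In this sketch masks are represented as sets of pixel coordinates, which is an assumption for brevity; `dice_and_iou` is a hypothetical helper.

```python
def dice_and_iou(pred, truth):
    """Return (Dice, IoU) for two non-empty sets of pixel coordinates."""
    pred, truth = set(pred), set(truth)
    if not pred and not truth:
        raise ValueError("both masks are empty")
    inter = len(pred & truth)
    union = len(pred | truth)
    dice = 2 * inter / (len(pred) + len(truth))
    iou = inter / union
    return dice, iou


pred = {(0, 0), (0, 1), (1, 0), (1, 1)}   # 4 predicted pixels
truth = {(0, 1), (1, 1), (0, 2), (1, 2)}  # 4 ground-truth pixels
dice, iou = dice_and_iou(pred, truth)
print(dice)  # 0.5  (2 * 2 / (4 + 4))
# Same value through the IoU form of the formula
print(abs(dice - 2 * iou / (1 + iou)) < 1e-12)  # True
```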

Non-Maximum Suppression (NMS)

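- Narrow Threshold (High IoU): few overlapping boxes are suppressed, so duplicate detections survive. Low Precision (More FP)

- Wide Threshold (Low IoU): boxes are suppressed aggressively, so detections of nearby distinct objects can be removed. Low Recall (More FN)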
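The effect of the threshold can be sketched with greedy NMS: keep the highest-scoring box, then drop every remaining box whose IoU with an already kept box exceeds the threshold. This is one common formulation, written here as an assumption-laden sketch; the boxes, scores, `(x1, y1, x2, y2)` format, and function names are all illustrative.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, iou_threshold):
    """Return indices of kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep box i only if it does not overlap any kept box too much
        if all(box_iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (5, 0, 15, 10), (100, 100, 110, 110)]
scores = [0.9, 0.8, 0.7, 0.6]

print(greedy_nms(boxes, scores, 0.9))  # [0, 1, 2, 3]  near-duplicate box 1 survives
print(greedy_nms(boxes, scores, 0.5))  # [0, 2, 3]
print(greedy_nms(boxes, scores, 0.3))  # [0, 3]  neighbouring box 2 is also removed
```

If box 1 is a duplicate of box 0, the 0.9 threshold leaves it in as a false positive; if box 2 covers a separate neighbouring object, the 0.3 threshold removes it and creates a false negative.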

See also: $\lambda_{NMS}$ for learned NMS.